How Snowflake is solving today's problem and capturing tomorrow's opportunity

An overview of Snowflake's data platform and data cloud.

In today’s post, I will be talking about Snowflake: how it is solving an important problem that data engineering teams face today, and how it is laying the foundations to capture a bigger opportunity that is still evolving. This is part 1 of the article on Snowflake.

Part 2: More detailed research in data platform, cloud data, use cases

Part 3: Growth, Financials, Risks

TL;DR

Data is an important asset. How data flows within and outside a company will determine its survival.

Data has evolved into structured, semi-structured, and unstructured forms. The volume and velocity of data generation have also increased exponentially.

This data evolution demands better data engineering practices and places new demands on data warehousing architecture.

AI/ML, decentralization, and blockchain adoption will force companies to adopt a cloud-first, cloud-only approach.

Let’s look at why data is important both within the company and outside the company.

Data is the new oil - Data is the new gold.

Within the company:

Data is increasingly becoming an important asset for organizations. We are seeing companies use data to build a competitive advantage, or moat, around their business model. Companies like ZoomInfo (a Snowflake customer) and Upstart are very good examples. At the core of Upstart’s business model is its Artificial Intelligence (AI), which determines which customers should get a loan and which should not. And at the core of AI is “quality data”. We will cover how Snowflake enhances the quality of data in another section. Upstart is mentioned only to show how data-first companies can differentiate themselves from competitors; based on publicly available data, it is unknown whether Upstart uses Snowflake.

Outside the company:

How data flows outside the company, among suppliers, partners, and customers, will become more important in the coming years.

In an API-driven and/or decentralized world, there will be an increasing need for companies to exchange data. Data exchange among organizations will become a necessity rather than a “good to have” feature. Companies will also be able to monetize their data assets, creating a new revenue stream. For example, if I’m a company selling weather data sets, I could sell the same data to the retail industry that wants to predict footfall in its shops, to the insurance industry that wants to price its premiums, and to the travel industry that wants to predict demand.

Snowflake’s data marketplace is a platform model that brings together data consumers and data producers and facilitates exchange. I personally like platform plays such as Apple’s App Store, which brings together app developers and consumers, The Trade Desk (digital ad buyers and sellers), Fiverr (freelancers and enterprises), etc. I will be writing a separate article on platform plays.

To summarize, think of Snowflake as a software infrastructure company that is building:

Elevators within a company that make it easier to DISCOVER and ACCESS data spread across multiple floors - eliminating data silos and unlocking the value in hidden data.

Highways between/among the companies that make it easier to SHARE data with the outside world and monetize the data.

Snowflake will be at the heart of every company that will survive this data revolution.

Qualities that define data:

First, let us look at the qualities of data before we look at the evolution of different models of Business Intelligence (BI). BI used to be the name given to teams that extract insights from data, but these days they are referred to as Analytics or Data Science teams.

Data Accuracy

Data Validity

Data Consistency

Data Timeliness

Data Completeness

There are also other qualities like data velocity (the rate at which data is generated), data volume (the amount of data generated), and data variety (structured, semi-structured, and unstructured data).

Data within an organization is not at rest. It is constantly transforming as it flows across systems from different departments and as those systems read and write it, and it leaves copies and metadata behind as it moves through each system.

“all data are equal but some are more equal than others”.

Quality data is more valuable than data that doesn’t have the above qualities. The quality of business reports, business insights, and business decisions depends on the above qualities of data.
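To make these qualities a little more concrete, here is a minimal Python sketch that scores a batch of records on three of them: completeness, validity, and timeliness. The record fields (`id`, `email`, `updated_at`), the one-day freshness threshold, and the function names are all assumptions made for illustration; real data quality tooling is far more thorough.

```python
from datetime import datetime, timedelta

# Hypothetical required fields for a customer record (illustrative assumption).
REQUIRED_FIELDS = ("id", "email", "updated_at")


def is_complete(record):
    """Completeness: every required field is present and non-empty."""
    return all(record.get(field) not in (None, "") for field in REQUIRED_FIELDS)


def is_valid(record):
    """Validity: the email has a plausible shape (a deliberately rough check)."""
    email = record.get("email") or ""
    return email.count("@") == 1 and "." in email.split("@")[-1]


def is_timely(record, now, max_age=timedelta(days=1)):
    """Timeliness: the record was refreshed within max_age of now."""
    updated = record.get("updated_at")
    return updated is not None and now - updated <= max_age


def quality_report(records, now):
    """Return the share of records that pass each check (0.0 to 1.0)."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "validity": 0.0, "timeliness": 0.0}
    return {
        "completeness": sum(is_complete(r) for r in records) / total,
        "validity": sum(is_valid(r) for r in records) / total,
        "timeliness": sum(is_timely(r, now) for r in records) / total,
    }
```

A report like this only measures quality; it does not fix it. The point is that each quality can be expressed as a check, and every extra copy of the data is another place where those checks can start to fail.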

How does Snowflake enhance the quality or prevent degradation in data quality?

Snowflake is ONE integrated CLOUD platform. This architectural design, which keeps all data in one integrated platform where it can be unified, analyzed, and shared, enhances the quality of the data.

Evolution of Data Engineering architecture:

Understanding the evolution of data engineering is important to grasp the size of the problem that Snowflake is trying to address.

Data Warehouse model

Earlier, companies relied on the data warehouse model, in which data went through ETL (Extract, Transform, Load) pipelines to create a warehouse.

The main problem that companies were trying to address was: how do I extract insights and generate reports without causing performance issues on my live systems (CRM, billing, etc.)?

Solution:

Create a data pipeline from the source systems to ingest data into the warehouse. Apply complex data transformation rules to convert the formats, and create views to get the data ready for reports. These data warehouses had dedicated storage and compute, sized to the reporting requirements.
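The steps above can be sketched in a few lines of Python. This is only a toy illustration, not how any particular warehouse product works: an in-memory SQLite database stands in for the warehouse, a list of dictionaries stands in for the live system, and the table, view, and function names are all made up for the example.

```python
import sqlite3


def extract(source):
    """Extract: read raw rows from the live system (a list stands in for it here)."""
    return list(source)


def transform(rows):
    """Transform: normalise names and amounts into one consistent shape."""
    return [
        (row["id"], row["customer"].strip().title(), round(float(row["amount"]), 2))
        for row in rows
    ]


def load(conn, rows):
    """Load: write the cleaned rows into the warehouse and expose a reporting view."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.execute(
        "CREATE VIEW IF NOT EXISTS revenue_by_customer AS "
        "SELECT customer, SUM(amount) AS revenue FROM orders GROUP BY customer"
    )
    conn.commit()


def run_pipeline(source, conn):
    """Run extract, transform, and load, then query the reporting view."""
    load(conn, transform(extract(source)))
    return conn.execute(
        "SELECT customer, revenue FROM revenue_by_customer ORDER BY customer"
    ).fetchall()
```

Calling `run_pipeline(rows, sqlite3.connect(":memory:"))` runs the reporting query against the warehouse copy rather than the live system, which is the whole point of the model. Even in this toy version, the transform step has to know the exact shape of the source rows, which hints at why real pipelines are expensive to build and keep up to date.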

Benefits:

BI and reporting teams were happy with this model since they had dedicated environments and didn’t have to listen to complaints from other systems teams about how their reporting queries were causing performance degradation to live systems.

Drawbacks:

Building an ETL pipeline is a very costly and time-consuming process (usually months or years). By the time the pipelines have been built, source systems might have changed or there may be more systems to integrate.

Time and cost increase drastically as the number of enterprise systems increases. In today’s world, using multiple enterprise systems for the same function, such as CRM, HR, or Finance, is very common; e.g., a company could have 2+ CRM systems and 2+ HR systems.

Another costly drawback is that business benefits such as reports and insights are seen only at the end (after months), which is a big no-no in today’s agile world, where time to market is crucial.

Scaling was very difficult. Companies had to decide storage and compute capacity upfront since these were on-premise solutions (not cloud solutions).

Data quality issues - There was always a delta between the data in the live system and data in the data warehouse. BI teams were working with old data - usually 1 day to 1 week old data.

There are various other technical disadvantages that lead to an increase in time (delay in extracting insights) and/or an increase in cost (developer time, missed opportunities in the market).

Data Lakes

As the nature of data changed from structured to semi-structured and unstructured (data variety), and as the velocity and volume of data generated increased exponentially, demand grew for the data lake concept.

Main problem:

How do I accommodate structured, semi-structured, and unstructured data, and how do I store data that is growing exponentially?

Solution:

Create data lakes where I can dump all kinds of data. Data lakes addressed the storage problem associated with data velocity, data volume, and data variety, but had their own limitations.

Data lakes introduced a new problem: data staleness. Data lakes didn’t support data transactions. In other words, data was at rest in a lake. Data in motion is more valuable than data at rest (Confluent and Apache Flink should ring a bell here). There was increasing demand for data lakes to support transactions, allowing simultaneous reading and writing of data.

Some enterprises have experimented with combining a data lake and a data warehouse, which resulted in a new set of problems: data quality, data redundancy, and the usual problem of increased developer time spent copying data across systems and checking for quality issues.

One of the senior executives from Snowflake (senior “snowflake” executive sounds bad), while talking about the problems that lakes pose, made a statement that is easy to comprehend. He said that a data lake is like a garage with endless storage capacity. Think of the garage in your house: you keep filling it, and it keeps growing. When you want to retrieve something urgently, that’s when the headache starts. Lakes address only the storage problem, but what enterprises need is an organized garage that makes it easy to retrieve articles and to move, add, and delete them.

Enter Lakehouse

The main problems at this stage were:

How do I allow the data within the lake to change/transform - accommodate concurrent reading and writing of data?

How do I make retrieval easy without causing data quality issues?

How do I scale quickly as my needs change?

How can I access data quickly - more real-time, more event-driven/streaming data?

How can I draw insights in real time, without any delay?

Solution:

The increasing demand for a platform that combines the benefits of data warehouses and data lakes led to the concept of the lakehouse. A lakehouse has the following benefits:

supports concurrent reading and writing of data

built-in data governance support

decoupling of storage and compute - easy to scale

end-to-end streaming, i.e., data in motion, to support real-time reporting and real-time data applications

Snowflake is a cloud platform that bundles the benefits of a data warehouse and a data lake in a single unified platform.

As Jim Barksdale, former CEO and President of Netscape, said:

“There are only two ways to make money in business: One is to bundle; the other is to unbundle.”

Snowflake is a lakehouse that is bundling data lake and data warehouse capabilities into a single platform and building many more capabilities for the future.

There are other evolving categories in data engineering, such as ETL dataflow (Fivetran), reverse ETL (Hightouch, Census), and real-time/streaming data (Confluent). Those are topics for another day.

These categories are smaller when compared to the Data platform space.

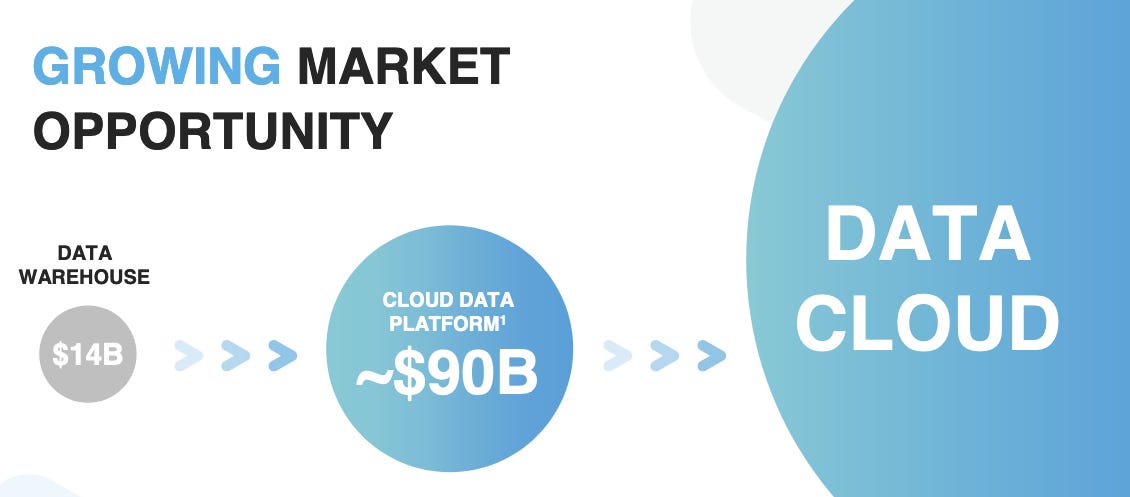

Size of the opportunity

Now that you have an understanding of the problem that Snowflake is trying to address, let us look at the size of the opportunity.

Snowflake executives don’t like it when investment analysts refer to them as a “Cloud Data Warehouse” company. They want to be called a cloud data platform or data cloud company. And I agree with Snowflake.

So far we have looked at only the cloud warehousing opportunity and only briefly touched upon the second big opportunity that Snowflake is trying to address. That is the data cloud.

Why does data cloud matter?

As I mentioned earlier, the ability of a company to share data with the outside world and to use data from the outside world will be critical for its very survival.

The quality of insights that you derive depends on the quality of Artificial Intelligence/Machine Learning (AI/ML). And the quality of an AI/ML model depends on the quality of data that it has been trained upon. Data within the organization won’t be sufficient to train your AI/ML models.

Decentralization/blockchain will also exponentially increase the rate of data exchange between companies.

Keeping data on-premise will be looked down upon even for critical data. Cloud will become the default option because only cloud data platforms can support such scale and such exchanges.

The “value migration” from on-premise software to a cloud-first, cloud-only approach is already in full swing today.

I’m currently working on part 2 and part 3 of this article and have a few more interesting companies that I will be writing about. Subscribe to be notified when the post goes live.

Part 2: More detailed research in data platform, cloud data, use cases

Part 3: Growth, Financials, Risks

If you liked this post, consider sharing it with a friend.